Building and Running ExecuTorch on Xtensa HiFi4 DSP¶

In this tutorial we will walk you through the process of getting setup to build ExecuTorch for an Xtensa HiFi4 DSP and running a simple model on it.

Cadence is both a hardware and software vendor, providing solutions for many computational workloads, including to run on power-limited embedded devices. The Xtensa HiFi4 DSP is a Digital Signal Processor (DSP) that is optimized for running audio based neural networks such as wake word detection, Automatic Speech Recognition (ASR), etc.

In addition to the chip, the HiFi4 Neural Network Library (nnlib) offers an optimized set of library functions commonly used in NN processing that we utilize in this example to demonstrate how common operations can be accelerated.

On top of being able to run on the Xtensa HiFi4 DSP, another goal of this tutorial is to demonstrate how portable ExecuTorch is and its ability to run on a low-power embedded device such as the Xtensa HiFi4 DSP. This workflow does not require any delegates, it uses custom operators and compiler passes to enhance the model and make it more suitable to running on Xtensa HiFi4 DSPs. A custom quantizer is used to represent activations and weights as uint8 instead of float, and call appropriate operators. Finally, custom kernels optimized with Xtensa intrinsics provide runtime acceleration.

In this tutorial you will learn how to export a quantized model with a linear operation targeted for the Xtensa HiFi4 DSP.

You will also learn how to compile and deploy the ExecuTorch runtime with the kernels required for running the quantized model generated in the previous step on the Xtensa HiFi4 DSP.

Note

The linux part of this tutorial has been designed and tested on Ubuntu 22.04 LTS, and requires glibc 2.34. Workarounds are available for other distributions, but will not be covered in this tutorial.

Prerequisites (Hardware and Software)¶

In order to be able to succesfully build and run ExecuTorch on a Xtensa HiFi4 DSP you’ll need the following hardware and software components.

Hardware¶

Software¶

x86-64 Linux system (For compiling the DSP binaries)

-

This IDE is supported on multiple platforms including MacOS. You can use it on any of the supported platforms as you’ll only be using this to flash the board with the DSP images that you’ll be building later on in this tutorial.

-

Needed to flash the board with the firmware images. You can install this on the same platform that you installed the MCUXpresso IDE on.

Note: depending on the version of the NXP board, another probe than JLink might be installed. In any case, flashing is done using the MCUXpresso IDE in a similar way.

-

Download this SDK to your Linux machine, extract it and take a note of the path where you store it. You’ll need this later.

-

Download this to your Linux machine. This is needed to build ExecuTorch for the HiFi4 DSP.

For cases with optimized kernels, the nnlib repo.

Setting up Developer Environment¶

Step 1. In order to be able to successfully install all the software components specified above users will need to go through the NXP tutorial linked below. Although the tutorial itself walks through a Windows setup, most of the steps translate over to a Linux installation too.

NXP tutorial on setting up the board and dev environment

Note

Before proceeding forward to the next section users should be able to succesfullly flash the dsp_mu_polling_cm33 sample application from the tutorial above and notice output on the UART console indicating that the Cortex-M33 and HiFi4 DSP are talking to each other.

Step 2. Make sure you have completed the ExecuTorch setup tutorials linked to at the top of this page.

Working Tree Description¶

The working tree is:

examples/xtensa/

├── aot

├── kernels

├── ops

├── third-party

└── utils

AoT (Ahead-of-Time) Components:

The AoT folder contains all of the python scripts and functions needed to export the model to an ExecuTorch .pte file. In our case, export_example.py defines a model and some example inputs (set to a vector of ones), and runs it through the quantizer (from quantizer.py). Then a few compiler passes, also defined in quantizer.py, will replace operators with custom ones that are supported and optimized on the chip. Any operator needed to compute things should be defined in meta_registrations.py and have corresponding implemetations in the other folders.

Operators:

The operators folder contains two kinds of operators: existing operators from the ExecuTorch portable library and new operators that define custom computations. The former is simply dispatching the operator to the relevant ExecuTorch implementation, while the latter acts as an interface, setting up everything needed for the custom kernels to compute the outputs.

Kernels:

The kernels folder contains the optimized kernels that will run on the HiFi4 chip. They use Xtensa intrinsics to deliver high performance at low-power.

Build¶

In this step, you will generate the ExecuTorch program from different models. You’ll then use this Program (the .pte file) during the runtime build step to bake this Program into the DSP image.

Simple Model:

The first, simple model is meant to test that all components of this tutorial are working properly, and simply does an add operation. The generated file is called add.pte.

cd executorch

python3 -m examples.portable.scripts.export --model_name="add"

Quantized Linear:

The second, more complex model is a quantized linear operation. The model is defined here. Linear is the backbone of most Automatic Speech Recognition (ASR) models.

The generated file is called XtensaDemoModel.pte.

cd executorch

python3 -m examples.xtensa.aot.export_example

Runtime¶

Building the DSP firmware image In this step, you’ll be building the DSP firmware image that consists of the sample ExecuTorch runner along with the Program generated from the previous step. This image when loaded onto the DSP will run through the model that this Program consists of.

Step 1. Configure the environment variables needed to point to the Xtensa toolchain that you have installed in the previous step. The three environment variables that need to be set include:

# Directory in which the Xtensa toolchain was installed

export XTENSA_TOOLCHAIN=/home/user_name/xtensa/XtDevTools/install/tools

# The version of the toolchain that was installed. This is essentially the name of the directory

# that is present in the XTENSA_TOOLCHAIN directory from above.

export TOOLCHAIN_VER=RI-2021.8-linux

# The Xtensa core that you're targeting.

export XTENSA_CORE=nxp_rt600_RI2021_8_newlib

Step 2. Clone the nnlib repo, which contains optimized kernels and primitives for HiFi4 DSPs, with git clone git@github.com:foss-xtensa/nnlib-hifi4.git.

Step 3. Run the CMake build. In order to run the CMake build, you need the path to the following:

The Program generated in the previous step

Path to the NXP SDK root. This should have been installed already in the Setting up Developer Environment section. This is the directory that contains the folders such as boards, components, devices, and other.

cd executorch

rm -rf cmake-out

# prebuild and install executorch library

cmake -DCMAKE_TOOLCHAIN_FILE=<path_to_executorch>/examples/cadence/cadence.cmake \

-DCMAKE_INSTALL_PREFIX=cmake-out \

-DCMAKE_BUILD_TYPE=Debug \

-DEXECUTORCH_BUILD_HOST_TARGETS=ON \

-DEXECUTORCH_BUILD_EXECUTOR_RUNNER=OFF \

-DEXECUTORCH_BUILD_FLATC=OFF \

-DFLATC_EXECUTABLE="$(which flatc)" \

-DEXECUTORCH_BUILD_EXTENSION_RUNNER_UTIL=ON \

-DPYTHON_EXECUTABLE=python3 \

-Bcmake-out .

cmake --build cmake-out -j8 --target install --config Debug

# build xtensa runner

cmake -DCMAKE_BUILD_TYPE=Debug \

-DCMAKE_TOOLCHAIN_FILE=<path_to_executorch>/examples/xtensa/xtensa.cmake \

-DCMAKE_PREFIX_PATH=<path_to_executorch>/cmake-out \

-DMODEL_PATH=<path_to_program_file_generated_in_previous_step> \

-DNXP_SDK_ROOT_DIR=<path_to_nxp_sdk_root> -DEXECUTORCH_BUILD_FLATC=0 \

-DFLATC_EXECUTABLE="$(which flatc)" \

-DNN_LIB_BASE_DIR=<path_to_nnlib_cloned_in_step_2> \

-Bcmake-out/examples/xtensa \

examples/xtensa

cmake --build cmake-out/examples/xtensa -j8 -t xtensa_executorch_example

After having succesfully run the above step you should see two binary files in their CMake output directory.

> ls cmake-xt/*.bin

cmake-xt/dsp_data_release.bin cmake-xt/dsp_text_release.bin

Deploying and Running on Device¶

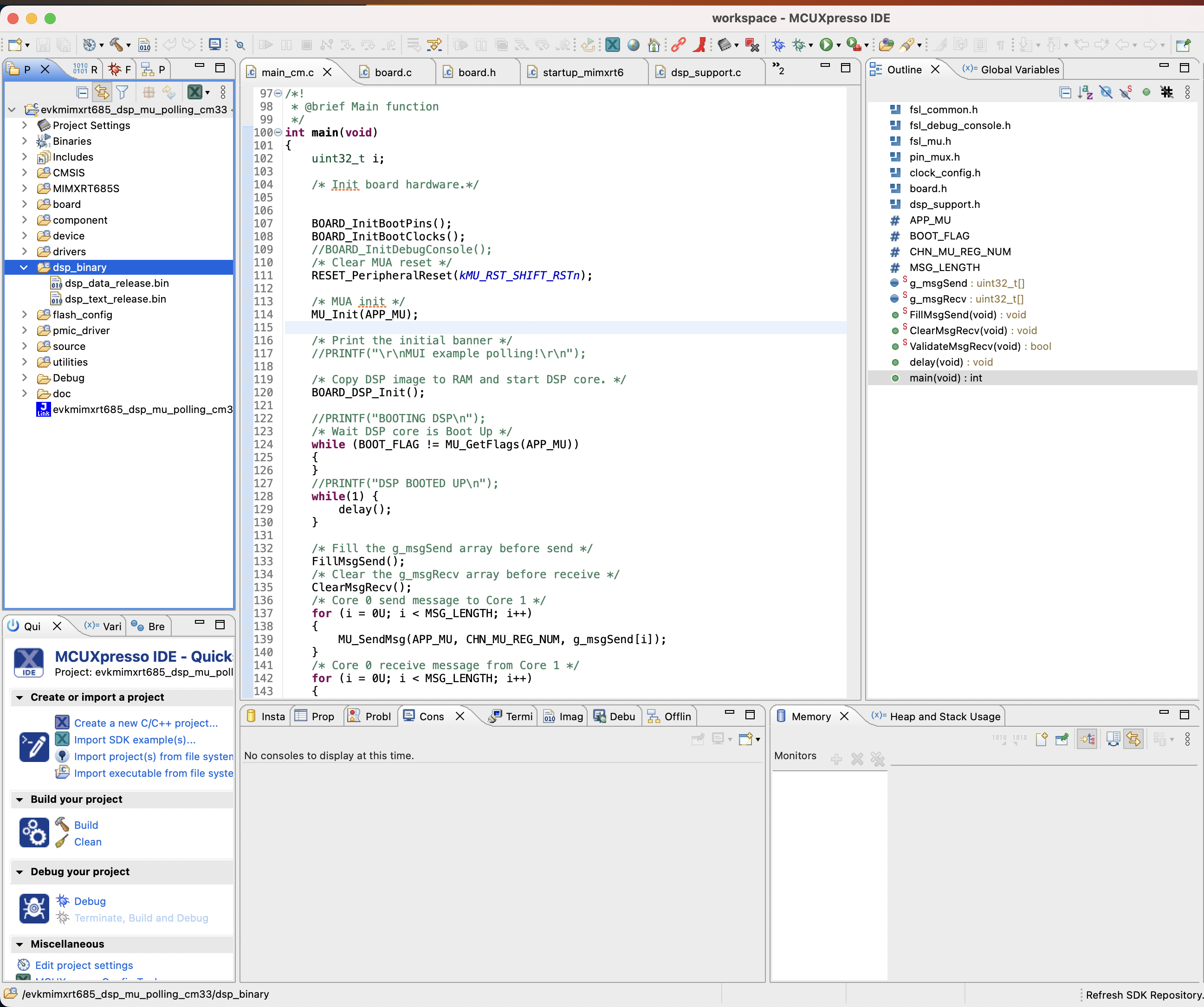

Step 1. You now take the DSP binary images generated from the previous step and copy them over into your NXP workspace created in the Setting up Developer Environment section. Copy the DSP images into the dsp_binary section highlighted in the image below.

Note

As long as binaries have been built using the Xtensa toolchain on Linux, flashing the board and running on the chip can be done only with the MCUXpresso IDE, which is available on all platforms (Linux, MacOS, Windows).

Step 2. Clean your work space

Step 3. Click Debug your Project which will flash the board with your binaries.

On the UART console connected to your board (at a default baud rate of 115200), you should see an output similar to this:

> screen /dev/tty.usbmodem0007288234991 115200

Executed model

Model executed successfully.

First 20 elements of output 0

0.165528 0.331055 ...

Conclusion and Future Work¶

In this tutorial, you have learned how to export a quantized operation, build the ExecuTorch runtime and run this model on the Xtensa HiFi4 DSP chip.

The model in this tutorial is a typical operation appearing in ASR models, and can be extended to a complete ASR model by creating the model in export_example.py and adding the needed operators/kernels to operators and kernels.

Other models can be created following the same structure, always assuming that operators and kernels are available.