Datasets & DataLoaders

Code for processing data samples can get messy and hard to maintain; we ideally want our dataset code

to be decoupled from our model training code for better readability and modularity.

PyTorch provides two data primitives: torch.utils.data.DataLoader and torch.utils.data.Dataset

that allow you to use pre-loaded datasets as well as your own data.

Dataset stores the samples and their corresponding labels, and DataLoader wraps an iterable around

the Dataset to enable easy access to the samples.
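
As a rough illustration of how the two primitives fit together, the sketch below builds a toy dataset from in-memory tensors and wraps it in a DataLoader. The tensors and the names toy_dataset and toy_loader are made up for this example; TensorDataset is a ready-made Dataset subclass from torch.utils.data.

```python
import torch
from torch.utils.data import DataLoader, TensorDataset

# Hypothetical toy data: 10 samples with 3 features each, plus binary labels.
features = torch.arange(30, dtype=torch.float32).reshape(10, 3)
labels = torch.arange(10) % 2

# Dataset: stores the samples and labels; supports len() and indexing.
toy_dataset = TensorDataset(features, labels)
first_features, first_label = toy_dataset[0]

# DataLoader: wraps the Dataset in an iterable that yields batches.
toy_loader = DataLoader(toy_dataset, batch_size=4)
batch_shapes = [tuple(x.shape) for x, _ in toy_loader]

print(len(toy_dataset))  # number of samples
print(batch_shapes)      # the last batch is smaller: 10 is not a multiple of 4
```

The Dataset answers "what is sample i?", while the DataLoader decides how samples are grouped and in which order they are visited.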

PyTorch domain libraries provide a number of pre-loaded datasets (such as FashionMNIST) that

subclass torch.utils.data.Dataset and implement functions specific to the particular data.

They can be used to prototype and benchmark your model. You can find them

here: Image Datasets,

Text Datasets, and

Audio Datasets

Loading a Dataset

Here is an example of how to load the Fashion-MNIST dataset from TorchVision. Fashion-MNIST is a dataset of Zalando’s article images consisting of 60,000 training examples and 10,000 test examples. Each example comprises a 28×28 grayscale image and an associated label from one of 10 classes.

We load the FashionMNIST Dataset with the following parameters:

- root is the path where the train/test data is stored,
- train specifies training or test dataset,
- download=True downloads the data from the internet if it’s not available at root,
- transform and target_transform specify the feature and label transformations.

import torch

from torch.utils.data import Dataset

from torchvision import datasets

from torchvision.transforms import ToTensor

import matplotlib.pyplot as plt

training_data = datasets.FashionMNIST(

    root="data",

    train=True,

    download=True,

    transform=ToTensor()

)

test_data = datasets.FashionMNIST(

    root="data",

    train=False,

    download=True,

    transform=ToTensor()

)

Downloading http://fashion-mnist.s3-website.eu-central-1.amazonaws.com/train-images-idx3-ubyte.gz

Downloading http://fashion-mnist.s3-website.eu-central-1.amazonaws.com/train-images-idx3-ubyte.gz to data/FashionMNIST/raw/train-images-idx3-ubyte.gz

Extracting data/FashionMNIST/raw/train-images-idx3-ubyte.gz to data/FashionMNIST/raw

Downloading http://fashion-mnist.s3-website.eu-central-1.amazonaws.com/train-labels-idx1-ubyte.gz

Downloading http://fashion-mnist.s3-website.eu-central-1.amazonaws.com/train-labels-idx1-ubyte.gz to data/FashionMNIST/raw/train-labels-idx1-ubyte.gz

Extracting data/FashionMNIST/raw/train-labels-idx1-ubyte.gz to data/FashionMNIST/raw

Downloading http://fashion-mnist.s3-website.eu-central-1.amazonaws.com/t10k-images-idx3-ubyte.gz

Downloading http://fashion-mnist.s3-website.eu-central-1.amazonaws.com/t10k-images-idx3-ubyte.gz to data/FashionMNIST/raw/t10k-images-idx3-ubyte.gz

Extracting data/FashionMNIST/raw/t10k-images-idx3-ubyte.gz to data/FashionMNIST/raw

Downloading http://fashion-mnist.s3-website.eu-central-1.amazonaws.com/t10k-labels-idx1-ubyte.gz

Downloading http://fashion-mnist.s3-website.eu-central-1.amazonaws.com/t10k-labels-idx1-ubyte.gz to data/FashionMNIST/raw/t10k-labels-idx1-ubyte.gz

Extracting data/FashionMNIST/raw/t10k-labels-idx1-ubyte.gz to data/FashionMNIST/raw

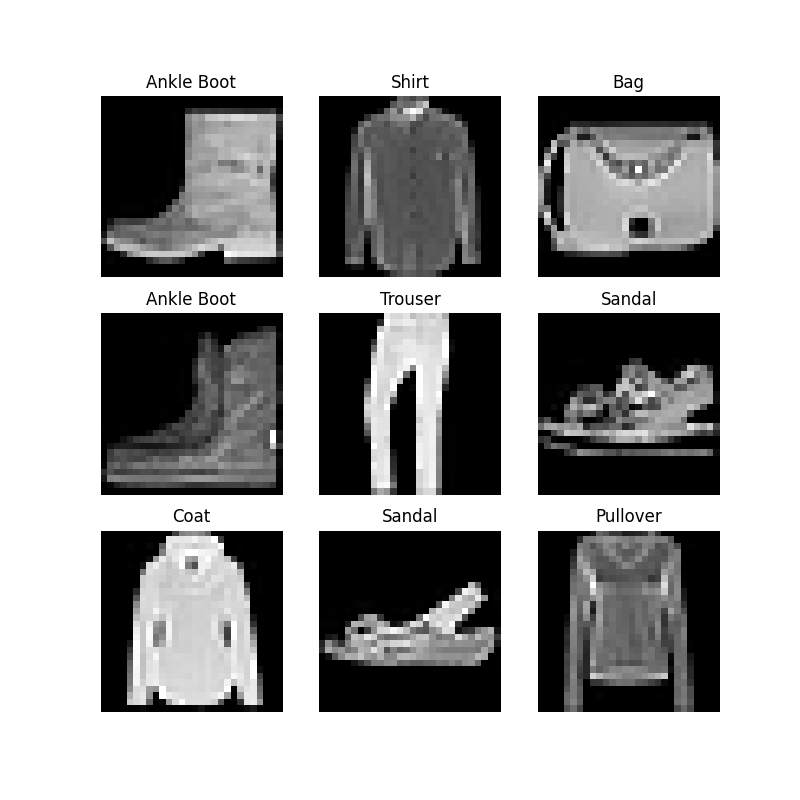

Iterating and Visualizing the Dataset

We can index Datasets manually like a list: training_data[index].

We use matplotlib to visualize some samples in our training data.

labels_map = {

    0: "T-Shirt",

    1: "Trouser",

    2: "Pullover",

    3: "Dress",

    4: "Coat",

    5: "Sandal",

    6: "Shirt",

    7: "Sneaker",

    8: "Bag",

    9: "Ankle Boot",

}

figure = plt.figure(figsize=(8, 8))

cols, rows = 3, 3

for i in range(1, cols * rows + 1):

    sample_idx = torch.randint(len(training_data), size=(1,)).item()

    img, label = training_data[sample_idx]

    figure.add_subplot(rows, cols, i)

    plt.title(labels_map[label])

    plt.axis("off")

    plt.imshow(img.squeeze(), cmap="gray")

plt.show()

Creating a Custom Dataset for your files

A custom Dataset class must implement three functions: __init__, __len__, and __getitem__.

Take a look at this implementation; the FashionMNIST images are stored

in a directory img_dir, and their labels are stored separately in a CSV file annotations_file.

In the next sections, we’ll break down what’s happening in each of these functions.

import os

import pandas as pd

from torchvision.io import read_image

class CustomImageDataset(Dataset):

    def __init__(self, annotations_file, img_dir, transform=None, target_transform=None):

        self.img_labels = pd.read_csv(annotations_file)

        self.img_dir = img_dir

        self.transform = transform

        self.target_transform = target_transform

    def __len__(self):

        return len(self.img_labels)

    def __getitem__(self, idx):

        img_path = os.path.join(self.img_dir, self.img_labels.iloc[idx, 0])

        image = read_image(img_path)

        label = self.img_labels.iloc[idx, 1]

        if self.transform:

            image = self.transform(image)

        if self.target_transform:

            label = self.target_transform(label)

        return image, label

__init__

The __init__ function is run once when instantiating the Dataset object. We initialize the directory containing the images, the annotations file, and both transforms (covered in more detail in the next section).

The labels.csv file looks like:

tshirt1.jpg, 0

tshirt2.jpg, 0

......

ankleboot999.jpg, 9

def __init__(self, annotations_file, img_dir, transform=None, target_transform=None):

    self.img_labels = pd.read_csv(annotations_file)

    self.img_dir = img_dir

    self.transform = transform

    self.target_transform = target_transform
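
One detail worth checking in your own annotations file is whether it has a header row. The sketch below assumes a file laid out exactly like the excerpt above, with no header and a space after each comma, and uses an in-memory string instead of a real labels.csv. The column names file_name and label are hypothetical. With pandas defaults, read_csv treats the first line as column names, so the first sample would be dropped; header=None avoids that.

```python
import io

import pandas as pd

# Stand-in for labels.csv with the layout shown above (no header row).
csv_text = "tshirt1.jpg, 0\ntshirt2.jpg, 0\nankleboot999.jpg, 9\n"

# Default parsing uses the first line as column names, so one sample is lost.
with_default_header = pd.read_csv(io.StringIO(csv_text))

# header=None keeps every row; skipinitialspace drops the space after each comma.
img_labels = pd.read_csv(
    io.StringIO(csv_text),
    header=None,
    names=["file_name", "label"],  # hypothetical column names
    skipinitialspace=True,
)

print(len(with_default_header), len(img_labels))
print(img_labels.iloc[0, 0], img_labels.iloc[0, 1])
```

If your file does start with a header row, the plain pd.read_csv(annotations_file) call used in __init__ is the appropriate one.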

__len__

The __len__ function returns the number of samples in our dataset.

Example:

def __len__(self):

    return len(self.img_labels)

__getitem__

The __getitem__ function loads and returns a sample from the dataset at the given index idx.

Based on the index, it identifies the image’s location on disk, converts that to a tensor using read_image, retrieves the

corresponding label from the csv data in self.img_labels, calls the transform functions on them (if applicable), and returns the

tensor image and corresponding label in a tuple.

def __getitem__(self, idx):

    img_path = os.path.join(self.img_dir, self.img_labels.iloc[idx, 0])

    image = read_image(img_path)

    label = self.img_labels.iloc[idx, 1]

    if self.transform:

        image = self.transform(image)

    if self.target_transform:

        label = self.target_transform(label)

    return image, label
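
To see the three methods working together without any files on disk, here is a minimal sketch of the same pattern over in-memory tensors. InMemoryImageDataset, the random images, and the two lambda transforms are all invented for this illustration; it is not the CustomImageDataset above, only a stand-in that follows the same protocol.

```python
import torch
from torch.utils.data import Dataset


class InMemoryImageDataset(Dataset):
    """Hypothetical stand-in for CustomImageDataset that skips disk I/O."""

    def __init__(self, images, labels, transform=None, target_transform=None):
        self.images = images
        self.labels = labels
        self.transform = transform
        self.target_transform = target_transform

    def __len__(self):
        # Called by len(dataset).
        return len(self.labels)

    def __getitem__(self, idx):
        # Called by dataset[idx].
        image = self.images[idx]
        label = self.labels[idx]
        if self.transform:
            image = self.transform(image)
        if self.target_transform:
            label = self.target_transform(label)
        return image, label


# Four fake 1x28x28 uint8 images, the same layout read_image returns (C, H, W).
images = torch.randint(0, 256, (4, 1, 28, 28), dtype=torch.uint8)
labels = [0, 3, 5, 9]

dataset = InMemoryImageDataset(
    images,
    labels,
    transform=lambda img: img.float() / 255.0,   # scale pixels to [0, 1]
    target_transform=lambda y: torch.tensor(y),  # int -> 0-dim tensor
)

image, label = dataset[2]
print(len(dataset), image.shape, image.dtype, label)
```

Because the transforms are applied inside __getitem__, they run lazily, once per sample access, rather than up front when the dataset is constructed.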

Preparing your data for training with DataLoaders

The Dataset retrieves our dataset’s features and labels one sample at a time. While training a model, we typically want to

pass samples in “minibatches”, reshuffle the data at every epoch to reduce model overfitting, and use Python’s multiprocessing to

speed up data retrieval.

DataLoader is an iterable that abstracts this complexity for us in an easy API.

from torch.utils.data import DataLoader

train_dataloader = DataLoader(training_data, batch_size=64, shuffle=True)

test_dataloader = DataLoader(test_data, batch_size=64, shuffle=True)
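
The effect of these options can be checked on a small synthetic dataset. The sketch below assumes 100 random samples (not FashionMNIST) so that it runs without a download; the names loader and trimmed are illustrative. It shows how batch_size determines the number of batches, and mentions num_workers, the argument that controls multiprocess loading.

```python
import math

import torch
from torch.utils.data import DataLoader, TensorDataset

# Hypothetical dataset: 100 random 1x28x28 "images" with labels in [0, 10).
dataset = TensorDataset(torch.randn(100, 1, 28, 28), torch.randint(0, 10, (100,)))

loader = DataLoader(
    dataset,
    batch_size=64,   # samples per minibatch
    shuffle=True,    # draw a new random order at the start of each epoch
    num_workers=0,   # 0 loads in the main process; >0 starts worker processes
)

batch_sizes = [len(y) for _, y in loader]
print(len(loader), math.ceil(len(dataset) / 64))  # batches per epoch
print(batch_sizes)                                # the final batch is partial

# drop_last=True discards the incomplete final batch instead.
trimmed = DataLoader(dataset, batch_size=64, drop_last=True)
print(len(trimmed))
```

Whether num_workers greater than zero actually speeds things up depends on how expensive __getitem__ is; for data already in memory, the extra processes may cost more than they save.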

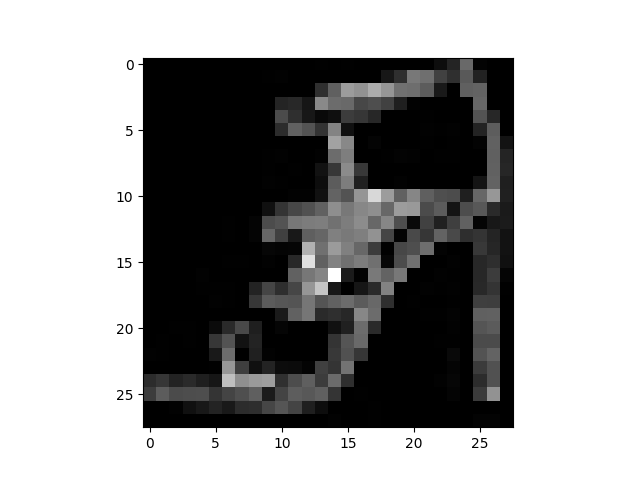

Iterate through the DataLoader

We have loaded that dataset into the DataLoader and can iterate through the dataset as needed.

Each iteration below returns a batch of train_features and train_labels (containing batch_size=64 features and labels respectively).

Because we specified shuffle=True, after we iterate over all batches the data is shuffled (for finer-grained control over

the data loading order, take a look at Samplers).

# Display image and label.

train_features, train_labels = next(iter(train_dataloader))

print(f"Feature batch shape: {train_features.size()}")

print(f"Labels batch shape: {train_labels.size()}")

img = train_features[0].squeeze()

label = train_labels[0]

plt.imshow(img, cmap="gray")

plt.show()

print(f"Label: {label}")

Feature batch shape: torch.Size([64, 1, 28, 28])

Labels batch shape: torch.Size([64])

Label: 5
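
The shuffling behaviour and the Samplers mentioned above can be illustrated on a tiny dataset of the integers 0 to 9. This is a sketch under assumptions: the dataset, the seed, and the helper epoch_order are made up for the example. Each pass over a shuffled loader visits every sample exactly once, in an order drawn anew per epoch; passing a sampler instead of shuffle gives explicit control over which indices are visited.

```python
import torch
from torch.utils.data import DataLoader, SubsetRandomSampler, TensorDataset

# Hypothetical dataset whose samples are just the integers 0..9.
dataset = TensorDataset(torch.arange(10))

# A seeded generator makes the shuffled order reproducible across runs.
generator = torch.Generator().manual_seed(0)
loader = DataLoader(dataset, batch_size=5, shuffle=True, generator=generator)


def epoch_order(data_loader):
    """Flatten one full pass over the loader into a list of sample values."""
    return [int(v) for (batch,) in data_loader for v in batch]


first_epoch = epoch_order(loader)   # one complete pass
second_epoch = epoch_order(loader)  # a second pass draws a fresh order
print(first_epoch)
print(second_epoch)

# A sampler replaces shuffle: here only the even indices are visited,
# in random order.
sampler = SubsetRandomSampler([0, 2, 4, 6, 8])
even_loader = DataLoader(dataset, batch_size=5, sampler=sampler)
even_order = epoch_order(even_loader)
print(even_order)
```

Note that shuffle and sampler are alternatives: DataLoader does not accept shuffle=True together with a custom sampler.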

Further Reading
